There is a moment many creators quietly recognize: you have an idea for a piece of music, you can almost hear it in your head, but turning that idea into something real still feels like a separate profession. Tools promise shortcuts, but most still require technical translation. When I first tried an AI Music Generator, what stood out was not just speed, but how directly it responded to intent. It felt less like operating software and more like describing something that already existed.

That shift matters because it reframes music creation from execution to articulation. Instead of asking “how do I build this,” the question becomes “how clearly can I describe what I want.” And that small change has surprisingly large implications.

How Language Becomes Structured Musical Output

From Descriptive Input To Musical Parameters

At the core of the system is a translation layer. When a user writes something like “slow emotional piano with cinematic atmosphere,” the platform is not treating that as vague inspiration. It maps each part into structured musical variables.

- “Slow” becomes tempo

- “Emotional” influences harmony and chord progression

- “Cinematic” shapes arrangement density and instrumentation

In my testing, this mapping is where most of the perceived “intelligence” comes from. The output is rarely random. It tends to follow a consistent internal logic tied to how language is interpreted.
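To make that mapping concrete, here is a minimal sketch of how descriptive words could be turned into structured parameters. Everything in it is an assumption for illustration: the cue tables, the default values, and the names `MusicParams` and `parse_prompt` are hypothetical, and a real system almost certainly uses a learned model rather than a keyword lookup.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical cue tables: the words and values are illustrative assumptions,
# not the platform's real vocabulary or mapping.
TEMPO_CUES = {"slow": 70, "fast": 140}
HARMONY_CUES = {"emotional": "minor", "uplifting": "major"}
ARRANGEMENT_CUES = {"cinematic": ["strings", "pads"]}
INSTRUMENT_NAMES = {"piano", "guitar", "drums"}


@dataclass
class MusicParams:
    tempo_bpm: int = 100   # assumed default when no tempo cue appears
    mode: str = "major"    # assumed default harmonic colour
    instruments: List[str] = field(default_factory=list)


def parse_prompt(prompt: str) -> MusicParams:
    """Map recognised cue words in a prompt onto structured parameters."""
    params = MusicParams()
    for raw in prompt.lower().split():
        word = raw.strip(".,!?")
        if word in TEMPO_CUES:
            params.tempo_bpm = TEMPO_CUES[word]
        elif word in HARMONY_CUES:
            params.mode = HARMONY_CUES[word]
        elif word in ARRANGEMENT_CUES:
            params.instruments.extend(ARRANGEMENT_CUES[word])
        elif word in INSTRUMENT_NAMES:
            params.instruments.append(word)
    return params


params = parse_prompt("slow emotional piano with cinematic atmosphere")
print(params)
```

With the example prompt, the sketch picks a slow tempo, a minor mode, and a piano layered with strings and pads. Words it does not recognise, such as "atmosphere," are simply ignored, which is one reason vague prompts tend to fall back on defaults.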

Why Text to Music Feels Different From Traditional Tools

What makes this experience distinct is the absence of intermediate steps. Traditional workflows often require switching between multiple tools: DAWs, sample libraries, MIDI editing. Here, those steps disappear behind a single text prompt.

The idea of Text to Music is not simply about convenience. It fundamentally changes the interaction model. You are no longer assembling music piece by piece; you are guiding a system that composes holistically.

How Structure Emerges Automatically

Another noticeable behavior is how the system organizes songs into recognizable sections:

- Intro

- Verse

- Chorus

- Transition elements

This happens even without explicit instruction. It suggests the model has internalized common song structures, which helps outputs feel more complete rather than fragmentary.
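One way to picture that implicit structure is as a timeline of named sections. The sketch below is only an illustration: the section lengths in `DEFAULT_FORM` and the `layout` helper are assumptions I made up, not something the platform exposes.

```python
from typing import List, NamedTuple, Tuple


class Section(NamedTuple):
    name: str
    bars: int


# Hypothetical default form; the bar counts are assumed for illustration.
DEFAULT_FORM = [
    Section("intro", 4),
    Section("verse", 8),
    Section("chorus", 8),
    Section("transition", 2),
    Section("verse", 8),
    Section("chorus", 8),
]


def layout(form: List[Section], bpm: float, beats_per_bar: int = 4) -> List[Tuple[str, float]]:
    """Return (section name, start time in seconds) for each section."""
    seconds_per_bar = beats_per_bar * 60 / bpm
    timeline = []
    bars_elapsed = 0
    for section in form:
        timeline.append((section.name, bars_elapsed * seconds_per_bar))
        bars_elapsed += section.bars
    return timeline


for name, start in layout(DEFAULT_FORM, bpm=120):
    print(f"{start:5.1f}s  {name}")
```

At 120 BPM in 4/4, each bar lasts two seconds, so the first chorus in this assumed form would start 24 seconds in.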

Understanding The Multi-Model Generation Approach

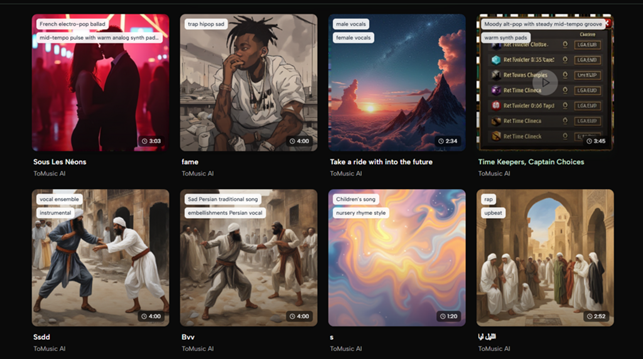

Why Different Models Produce Different Results

One aspect that becomes clear over repeated use is that not all outputs are created equal. The system relies on multiple generation models, each tuned for slightly different goals.

- Some emphasize vocal clarity and expression

- Others prioritize arrangement complexity

- Some handle longer compositions more reliably

Switching between them is less about “better or worse” and more about alignment with intent. In practice, I found that certain prompts consistently performed better under specific models.
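Choosing a model by alignment with intent can be sketched as a simple weighted match. The model names and the 0 to 3 strength ratings below are hypothetical placeholders of mine, not the platform's actual models or any published benchmark.

```python
from typing import Dict

# Hypothetical model names and strength ratings, invented for illustration.
MODEL_PROFILES: Dict[str, Dict[str, int]] = {
    "vocal_focused": {"vocals": 3, "arrangement": 1, "long_form": 1},
    "arrangement_focused": {"vocals": 1, "arrangement": 3, "long_form": 2},
    "long_form": {"vocals": 2, "arrangement": 2, "long_form": 3},
}


def pick_model(priorities: Dict[str, float]) -> str:
    """Return the model whose strengths best match the weighted priorities."""

    def fit(name: str) -> float:
        profile = MODEL_PROFILES[name]
        return sum(profile.get(goal, 0) * weight for goal, weight in priorities.items())

    # On a tie, max() keeps the first model in dictionary order.
    return max(MODEL_PROFILES, key=fit)


print(pick_model({"vocals": 1.0}))
print(pick_model({"arrangement": 1.0, "long_form": 0.5}))
```

The point of the sketch is that no model wins outright; the "best" one changes as soon as the priorities do.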

Consistency Versus Exploration Tradeoff

There is an interesting balance between predictability and variation. On one hand, similar prompts tend to produce structurally coherent results. On the other, each generation introduces subtle differences.

This makes the workflow feel closer to iteration than control. You guide, observe, adjust, and repeat.

How Lyrics to Music AI Shapes Vocal Integration

Aligning Words With Rhythm And Melody

When lyrics are introduced, the system performs an additional layer of alignment. Words are not simply read over a track; they are integrated into rhythm and phrasing.

The Lyrics to Music AI process appears to handle:

- Syllable timing

- Stress patterns

- Melodic contour matching

In practical terms, this means the same set of lyrics can sound dramatically different depending on style and prompt context.
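As a rough illustration of syllable timing, the sketch below estimates syllables by counting vowel groups and spreads them evenly across a number of beats. Both the vowel-group heuristic and the helper names `count_syllables` and `align_line` are my own simplifying assumptions; a real system would also account for stress and melody, which this ignores.

```python
import re
from typing import List, Tuple


def count_syllables(word: str) -> int:
    """Rough estimate: count runs of vowels, with a minimum of one."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def align_line(line: str, beats: float) -> List[Tuple[str, float]]:
    """Return (word, start beat) pairs, giving each syllable equal time."""
    words = re.findall(r"[A-Za-z']+", line)
    total = sum(count_syllables(w) for w in words)
    if total == 0:
        return []
    beats_per_syllable = beats / total
    placements = []
    position = 0.0
    for word in words:
        placements.append((word, round(position, 3)))
        position += count_syllables(word) * beats_per_syllable
    return placements


# The same line spread over a long phrase and a short one.
print(align_line("under the open sky", beats=6))
print(align_line("under the open sky", beats=3))
```

Changing only the `beats` argument compresses or stretches the phrasing, which is a crude stand-in for how one lyric can sit very differently in a slow and a fast arrangement.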

Why Context Changes Interpretation Of Lyrics

One detail that becomes obvious after experimentation is that lyrics are highly sensitive to musical framing.

A line that feels introspective in a slow acoustic setting may feel theatrical or exaggerated in a high-energy arrangement. The system does not treat a lyric's meaning as fixed; it adapts the delivery to the musical context.

A Practical Walkthrough Of The Creation Flow

Step One: Define A Clear Musical Direction

Start with a short description or lyrics. Clarity matters more than length. Specific emotional or stylistic cues tend to produce more consistent results.

Step Two: Adjust Style And Output Preferences

Choose the general style, mood, and whether vocals are needed. This step defines the boundaries within which the system generates.

Step Three: Generate And Evaluate Variations

After generation, listen critically. It is common to refine prompts slightly and regenerate multiple versions before arriving at a satisfying result.

This three-step process is simple, but the iteration loop is where most of the creative control emerges.
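The loop itself can be sketched in a few lines. Note the assumptions: `generate`, `score`, and `refine` are hypothetical callables standing in for the platform, your own listening judgment, and your prompt edits. None of them is a real API, and the stubs at the bottom exist only so the example runs.

```python
from typing import Callable, Optional, Tuple


def iterate(
    prompt: str,
    generate: Callable[[str], str],
    score: Callable[[str], float],
    refine: Callable[[str, str], str],
    max_rounds: int = 5,
    target: float = 0.8,
) -> Optional[Tuple[str, float, str]]:
    """Generate, evaluate, and refine until a result is good enough.

    Returns the best (track, score, prompt) seen, or None if max_rounds is 0.
    """
    best = None
    for _ in range(max_rounds):
        track = generate(prompt)
        rating = score(track)
        if best is None or rating > best[1]:
            best = (track, rating, prompt)
        if rating >= target:
            break
        prompt = refine(prompt, track)
    return best


# Stand-in stubs so the sketch runs; in practice scoring is a person listening.
fake_generate = lambda p: p.upper()
fake_score = lambda t: min(1.0, len(t.split()) / 5)
fake_refine = lambda p, t: p + " warmer"

print(iterate("slow piano", fake_generate, fake_score, fake_refine))
```

The design choice worth noticing is that the loop keeps the best result so far rather than the latest one, since a refined prompt does not always produce a better track.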

Comparing AI Music Creation With Traditional Workflows

| Aspect | AI-Based Creation | Traditional Production |
| --- | --- | --- |
| Entry Barrier | Very low | High |
| Time To First Result | Minutes | Hours or days |
| Technical Knowledge | Minimal | Extensive |
| Iteration Speed | Rapid | Slower |
| Creative Control | Prompt-driven | Manual precision |

The table highlights a broader shift. The advantage is not just speed, but accessibility. And the tradeoff is that control becomes indirect, mediated through language rather than manual editing.

Where This Approach Works Best In Practice

Content Creation And Short-Form Media

For creators producing frequent content, the ability to generate unique background music quickly is particularly useful. It reduces reliance on stock libraries and avoids repetition.

Early-Stage Idea Exploration

Another strong use case is sketching ideas. Instead of building demos from scratch, users can test multiple directions rapidly and refine later if needed.

Lightweight Commercial Use Cases

For smaller projects—ads, social campaigns, prototypes—the balance between speed and quality often feels sufficient without additional production layers.

Limitations That Still Shape The Experience

Dependence On Prompt Quality

Results are closely tied to how well prompts are written. Vague descriptions can lead to generic outputs, while overly complex prompts may produce inconsistent interpretations.

Iteration Is Still Required

Despite automation, first outputs are rarely final. Multiple generations are usually necessary to reach something that aligns closely with expectations.

Fine Control Remains Limited

Compared to traditional production tools, adjusting specific musical elements after generation is still less flexible. Control happens before generation rather than after.

Why This Feels Like A Shift In Creative Roles

What stands out most is not just the technology, but the change in how creators engage with it. The role shifts from builder to director.

Instead of assembling each musical layer, you define:

- Mood

- Structure

- Intent

And the system fills in execution details.

In that sense, the tool is less about replacing creativity and more about redistributing where effort is spent. It reduces the technical barrier, but increases the importance of clarity in thinking and description.

How This Changes The Meaning Of Musical Skill

There is an emerging distinction between two types of skill:

- Technical production ability

- Conceptual articulation ability

Platforms like this lean heavily toward the latter. Being able to describe a sound precisely becomes just as important as being able to produce it manually.

Looking Ahead At The Direction Of AI Music Systems

If current trends continue, we may see:

- More precise prompt interpretation

- Greater consistency across generations

- Better post-generation editing capabilities

At the same time, the core interaction model—describing rather than constructing—will likely remain central.

The interesting question is not whether these systems will replace traditional tools, but how they will coexist. For some workflows, they will become the starting point. For others, they may remain a complementary layer.

Either way, the boundary between idea and execution is becoming thinner. And once that boundary shifts, the entire creative process tends to reorganize around it.